Question about Pixels

Sep 18, 2021 11:40:19 #

RichKenn

Loc: Merritt Island, FL

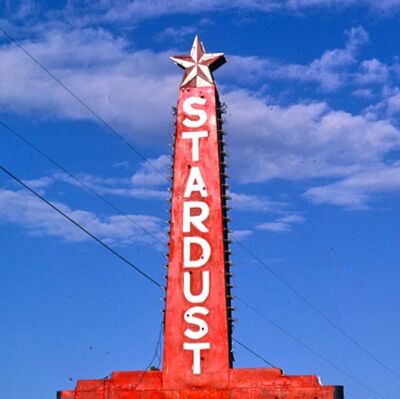

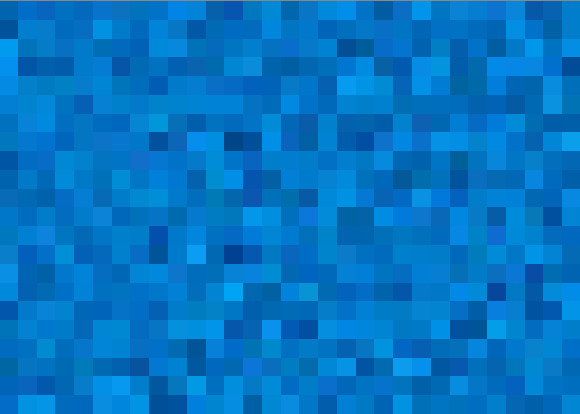

I have taken many pictures of clear blue sky. If I blow up a small section of that sky, enough to see the pixels, I discover a random pattern of quite a variation of the color of individual pixels. See the attached sample. What I am wondering is, why aren't the individual pixels closer to the same color?

Sep 18, 2021 11:58:01 #

RichKenn wrote:

I have taken many pictures of clear blue sky. If I blow up a small section of that sky, enough to see the pixels, I discover a random pattern of quite a variation of the color of individual pixels. See the attached sample. What I am wondering is, why aren't the individual pixels closer to the same color?

Can you post the original, without the crop and possibly without any editing? Also, this appears to be more than 100% resolution. When posting the original, be sure to store the attachment.

Sep 18, 2021 12:02:43 #

Because every square inch of the sky is not transmitting/reflecting the same amount of light in the same wavelengths? The camera sensor can pick it up, but we can't.

Sep 18, 2021 12:08:29 #

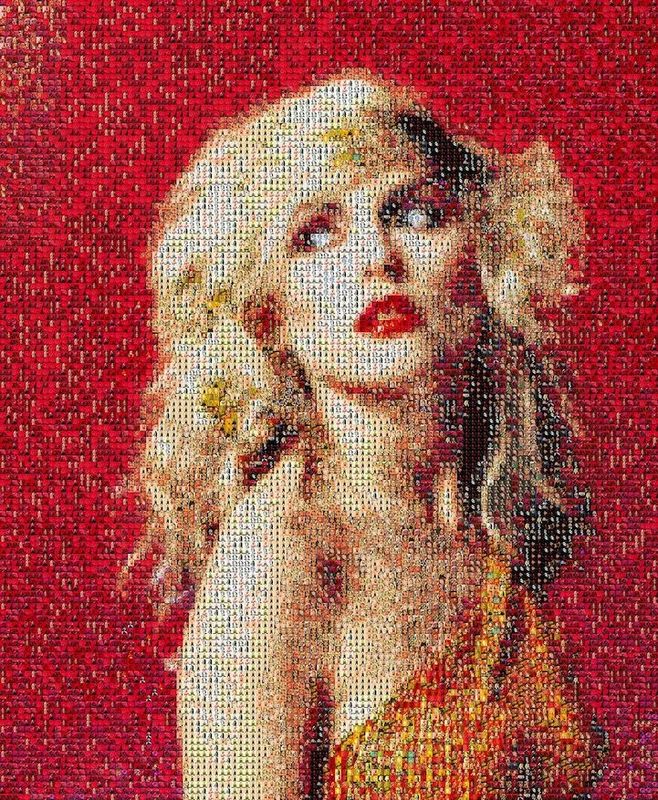

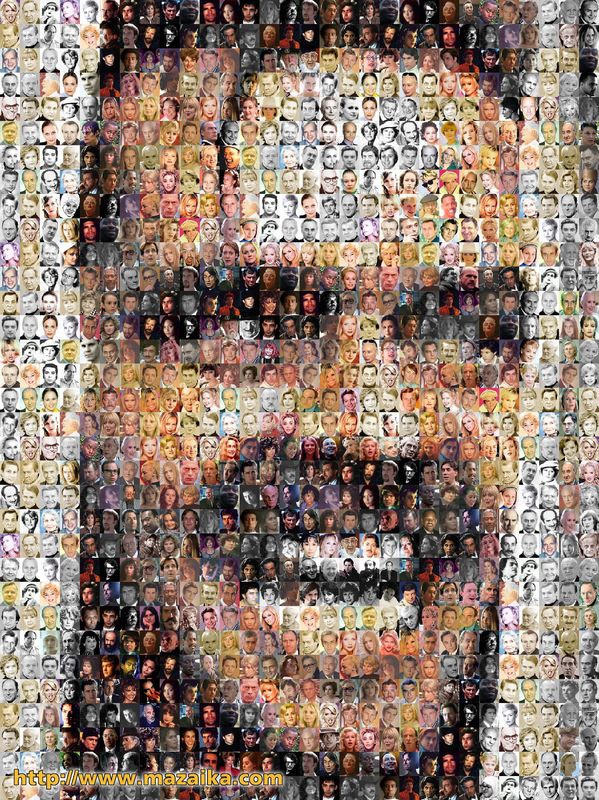

That's the camera's attempt to represent the sky the only way it can - with those tiny squares. It's similar to the way a newspaper prints pictures - lots of tiny dots. Zoom in close, and you'll see how the dots vary in color. Zoom back out, and you have a normal picture. Below are two examples of pictures made from tiny images. Reduce Jack Nicholson (2nd pic), and it will look almost normal.

What you have is the result of digital photography. Film would have a smoother transition.

There is a program that lets you make a picture from thousands of tiny ones, but I can't recall the name. I can't find the one that I have, but here are a bunch of mosaic programs.

https://www.google.com/search?q=photo+mosaic+programs&rlz=1C1CHBF_enUS925US925&oq=photo+mosaic+programs&aqs=chrome..69i57j0i22i30j69i60.5864j0j1&sourceid=chrome&ie=UTF-8

Bonus points: Who remembers the statement that goes with Jack's picture? It's a classic.

Sep 18, 2021 12:12:46 #

Sep 18, 2021 12:17:04 #

Sep 18, 2021 16:00:45 #

jerryc41 wrote:

That's the camera's attempt to represent the sky t...

"I have pictures for teeth"

Sep 19, 2021 09:48:10 #

Some or most of this could be due to the nature of the Bayer filter. The exact patterns are proprietary, I believe, but each set of 4 pixels has 1 red, 2 green, and 1 blue. The filtered pixels are also still somewhat sensitive to other colors. That is the reason true black-and-white cameras show higher resolution for the same number of pixels. Splitting out the three channels and comparing them should show how much of the variation comes from the filter, as I am guessing, versus real variation in sky color.
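
A minimal sketch of that channel comparison, assuming Python with NumPy. The `channel_stats` helper is a made-up name, and the "sky" here is synthetic (a flat blue with invented noise levels), since the original attachment is not available. With a real crop you would load the image into an H x W x 3 array and pass that in instead.

```python
import numpy as np

def channel_stats(patch):
    """Return {channel: (mean, std)} for an H x W x 3 RGB array."""
    patch = np.asarray(patch, dtype=np.float64)
    if patch.ndim != 3 or patch.shape[2] != 3:
        raise ValueError("expected an H x W x 3 RGB array")
    names = ("red", "green", "blue")
    return {
        name: (float(patch[..., i].mean()), float(patch[..., i].std()))
        for i, name in enumerate(names)
    }

# Hypothetical stand-in for a sky crop: a flat blue plus invented noise levels.
rng = np.random.default_rng(0)
base = np.array([90.0, 140.0, 220.0])   # assumed sky colour (R, G, B)
sigma = np.array([4.0, 2.0, 4.0])       # assumed per-channel noise
sky = base + rng.normal(0.0, 1.0, (64, 64, 3)) * sigma

# A larger std in red and blue than in green would hint at the filter layout
# (fewer red/blue samples) rather than at real variation in the sky.
for name, (mean, std) in channel_stats(sky).items():
    print(f"{name:5s} mean={mean:6.1f} std={std:4.2f}")
```

If all three channels of a real crop vary by about the same amount and in step with each other, that would point more toward brightness noise than toward anything specific to one color of the filter.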

Sep 19, 2021 10:24:05 #

RichKenn wrote:

I have taken many pictures of clear blue sky. If I blow up a small section of that sky, enough to see the pixels, I discover a random pattern of quite a variation of the color of individual pixels. See the attached sample. What I am wondering is, why aren't the individual pixels closer to the same color?

It appears that what you are seeing is noise. It's pretty normal for a digital sensor that does not get enough exposure. You can't fix that by raising the ISO.

The sensor reacts to the arrival of photons by accumulating a charge. If there is enough exposure, you get plenty of photons and plenty of charge, and the randomness evens out, leaving the sensor with a pretty uniform (noiseless) pattern.

But if the exposure is too low the randomness of the arrival of the photons will result in an uneven distribution of values among adjacent pixels. To make matters worse, only 25% of the pixels in a Bayer array (less in an X-Trans array) are dedicated to blue and the remainder to red and green (there is a lot of overlap).

If you shoot at the lowest practical ISO and maximize the exposure you can minimize the noise.
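
The effect of exposure on that randomness can be sketched with a small simulation, assuming Python with NumPy. This models only photon shot noise as a Poisson process (read noise, the Bayer filter, and in-camera processing are ignored), and the photon counts are arbitrary illustrative numbers, not measurements from any camera. `relative_noise` is a made-up helper name.

```python
import numpy as np

def relative_noise(mean_photons, n_pixels=100_000, seed=0):
    """Std/mean of simulated photon counts across pixels lit identically."""
    rng = np.random.default_rng(seed)
    counts = rng.poisson(mean_photons, n_pixels)
    return counts.std() / counts.mean()

# Fewer photons per pixel -> larger pixel-to-pixel variation.
# For Poisson arrivals the expected ratio is 1 / sqrt(mean_photons).
for n in (25, 400, 10_000):
    print(f"{n:6d} photons: simulated {relative_noise(n):.4f}, "
          f"theory {1 / np.sqrt(n):.4f}")
```

In this model every pixel sees exactly the same light, yet neighbouring pixels still come out different, and the relative difference shrinks only as the number of collected photons grows.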

Sep 19, 2021 12:02:13 #

What our eye perceives after our brain processes it (in this case a uniform blue sky) is not what the camera's sensor and its "brain" record, which varies more or less with aperture, shutter & ISO. If eyes and cameras were identical, in most cases there would be no need for PP. <grin>

Sep 19, 2021 13:13:53 #

I find that I have to remind myself that the sensor is an 'analog device' producing an analog voltage signal. In order for the "digital" camera to produce an image from this voltage signal, it must send that signal to the analog-to-digital converter, after which the camera's microprocessor can create the digital image. Because each of the sensor's "photosites" senses the light striking it in a minutely different way from those next to it, the processed output will show slight variations in pixel color.

I suspect that in laboratory conditions with control color targets and known color temperatures that the resulting pixel display can be evaluated for quality control purposes.
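
The analog-to-digital step described above can be sketched as follows, assuming Python with NumPy. The 12-bit depth, the 1.0 V full scale, and the photosite voltages are all assumptions chosen for illustration; `adc` is a made-up function, not any camera's actual converter.

```python
import numpy as np

def adc(voltage, full_scale=1.0, bits=12):
    """Quantize an analog voltage to an integer code (idealized converter)."""
    levels = 2 ** bits - 1
    v = np.clip(np.asarray(voltage, dtype=np.float64), 0.0, full_scale)
    return np.round(v / full_scale * levels).astype(np.int64)

# Four neighbouring photosites looking at the "same" blue sky, each with a
# slightly different (invented) voltage.
voltages = np.array([0.5000, 0.5012, 0.4991, 0.5007])
print(adc(voltages))
```

Tiny voltage differences between neighbours become different integer codes, which is one way adjacent pixels of a uniform subject end up with different values.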

Sep 19, 2021 14:45:06 #

sippyjug104 wrote:

I find that I have to remind myself that the sensor is an 'analog device' producing an analog voltage signal. ....

Yes, and it's responding to the arrival of individual photons, a digital signal.

Sep 19, 2021 14:48:54 #

RichKenn

Loc: Merritt Island, FL

I am not sure I understand yet, but I will keep working on it. Thanks to all for your input. I appreciate hearing from you.

Sep 19, 2021 21:49:24 #

RichKenn wrote:

I have taken many pictures of clear blue sky. If I blow up a small section of that sky, enough to see the pixels, I discover a random pattern of quite a variation of the color of individual pixels. See the attached sample. What I am wondering is, why aren't the individual pixels closer to the same color?

Those values are calculated by complex Bayer demosaicing algorithms from a staggered array of RGGB sensor elements (yes, twice as many green as red or blue).

Always remember, a sensel is not a pixel. But it is used to create several adjacent pixels. Pixels are numbers in a file. They have red, green, and blue brightness levels, but no size. They aren’t physical. They can be represented by monitor phosphors or dye clouds or ink dots that do have sizes.
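
To illustrate how one sensel feeds several adjacent pixels, here is a deliberately crude demosaicing sketch, assuming Python with NumPy. It simply averages same-color sensels in each 3x3 neighborhood of an assumed RGGB layout. Real cameras and raw converters use far more sophisticated methods; the function names are made up for this example.

```python
import numpy as np

def bayer_masks(h, w):
    """Boolean masks for an assumed RGGB mosaic: R at (even, even), B at (odd, odd)."""
    rows, cols = np.indices((h, w))
    red = (rows % 2 == 0) & (cols % 2 == 0)
    blue = (rows % 2 == 1) & (cols % 2 == 1)
    green = ~(red | blue)
    return red, green, blue

def box3(a):
    """Sum over each 3x3 neighborhood, zero-padded at the edges."""
    h, w = a.shape
    p = np.pad(a, 1)
    return sum(p[dy:dy + h, dx:dx + w] for dy in range(3) for dx in range(3))

def demosaic(raw):
    """Turn an H x W mosaic (H, W >= 2) into H x W x 3 RGB by neighborhood averaging."""
    raw = np.asarray(raw, dtype=np.float64)
    out = np.empty(raw.shape + (3,))
    for i, mask in enumerate(bayer_masks(*raw.shape)):
        # Average of the sensels of this color within the 3x3 neighborhood.
        out[..., i] = box3(raw * mask) / box3(mask.astype(np.float64))
    return out

# Light up a single blue sensel and count how many output pixels it touches.
raw = np.zeros((6, 6))
raw[3, 3] = 1.0                       # (odd, odd) is a blue site in this layout
rgb = demosaic(raw)
print(np.count_nonzero(rgb[..., 2]))  # 9: the sensel and its eight neighbours
```

Even in this simple scheme, a random fluctuation in one sensel spreads into the color of every pixel around it, which is part of why noise in a flat sky shows up as small colored blotches rather than isolated dots.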

Sep 19, 2021 22:28:01 #
