ISO (Traditional) and ISO (digital)

Oct 16, 2022 02:57:14 #

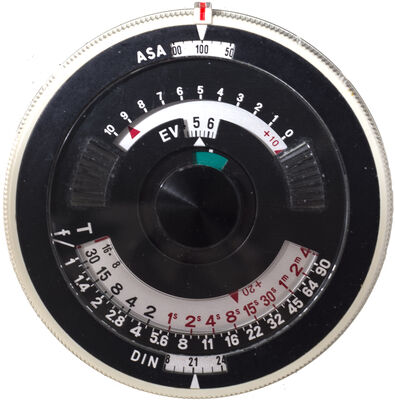

ISO is the international standard for film sensitivity. It is a compromise between ASA (the US standard) and DIN (the German standard).

ISO is a fixed number indicating a film's sensitivity. The result can be modified when the film is developed (post-processed).

While manufacturers still use the same nomenclature today, it does not mean the same thing when used with a digital camera.

A camera sensor array (SA), regardless of how recent it is, has a single sensitivity that never changes. What changes is the electrical current used to transform the analog data (light) into digital. The more electricity, the more data is captured under lower light conditions. The cost is signal noise.

The real sensitivity of an SA lies with its ability to capture a range of luminosity. This is referred to as dynamic range (DR). If at first the DR was so limited that it made acceptance of digital cameras difficult, this is not the case anymore, as sensors are capable of capturing more than film ever could. There is a drawback…

Grain… Grain is the result of random clustering of chemicals on a film, creating an effect that was sometimes welcomed for artistic purposes. A digital camera cannot create grain; instead it creates predictable noise* that depends on, among other things, the voltage applied to the SA.

So why is the term ISO still used? Simply because folks were used to it and, since the effect can be simulated, it is used as a setting scale.

The DR of the recorded data differs between JPG output and a raw file**. This is why there is a blatant need for cameras to have JPG/raw exposure selection, not file format selection.

Please comment before I post this in the main channel as well as the FAQ.

-----------

* Noise is more accurately described as signal/static noise. I referred to it as 'digital noise'.

** A camera JPG is created from the sensor's initial digital capture (the raw file); the conversion reduces the DR and applies lossy compression.

Credits:

RGG

wham121736

Oct 16, 2022 03:06:36 #

Rongnongno wrote:

ISO is the international standard for film sensiti... (show quote)

Regardless of what one wants to say about sensors, ISO in digital is still ISO.

It compares directly to "film" ISO, as I use the same settings whether it is film or digital for the same exposure.

So if at X ISO a setting is underexposed on film it is exactly the same underexposure in digital. I have made direct comparisons and it is the same, at least for me every time.

For old film cameras with no meter I use the digital camera as the meter.

Oct 16, 2022 06:15:46 #

I have also done in the past what Architect describes. I did a shoot with my old Nikon F, using one of my digital cameras to find the exposure. Same ISO for both.

Results? Excellent.

Oct 16, 2022 07:46:10 #

There is an ISO standard for setting ISO values in digital cameras:

ISO 19093:2018(en)

Photography — Digital cameras — Measuring low-light performance

Oct 16, 2022 07:52:02 #

It's a progressive scale of relative sensitivity to light, regardless of the method used to obtain the sensitivity or any resultant side effects.

Oct 16, 2022 07:53:52 #

Oct 16, 2022 07:58:25 #

starlifter wrote:

My head hurts.

Just take a deep breath and say "The larger the number, the more sensitive."

Oct 16, 2022 08:44:08 #

camerapapi wrote:

I have also done in the past what Architect describes. I did a shoot with my old Nikon F, using one of my digital cameras to find the exposure. Same ISO for both.

Results? Excellent.

Oct 16, 2022 08:44:34 #

Longshadow wrote:

It's a progressive scale of relative sensitivity to light, regardless of the method used to obtain the sensitivity or any resultant side effects.

Oct 16, 2022 08:51:01 #

zug55

Loc: Naivasha, Kenya, and Austin, Texas

ISO per se is the same in film and digital photography. It is standardized to correspond to the settings on the other two sides of the exposure triangle: increasing the ISO by one stop, for instance, allows you to shorten the exposure time by one stop.

There are two pragmatic differences, though. In the film days, the film we used determined the ISO until we were done with the roll. We could not adjust the ISO on a picture-by-picture basis. The second difference is that there is much less noise with digital sensors. An ISO 800 film could produce some noise, while on my Sony mirrorless I start to see the same level of noise at ISO 12800. While the sensitivity is the same, the effect on noise level is dramatically different.
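
The one-stop trade-off described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic at a fixed aperture; the function names `equivalent_shutter` and `stops_between` are made up for this example and do not come from any camera or library.

```python
import math


def stops_between(iso_from: float, iso_to: float) -> float:
    """Number of stops separating two ISO settings (positive = more sensitive)."""
    return math.log2(iso_to / iso_from)


def equivalent_shutter(shutter_s: float, iso_from: float, iso_to: float) -> float:
    """Shutter time giving the same image lightness after an ISO change.

    Assumes the aperture and the scene light stay the same.
    """
    return shutter_s * iso_from / iso_to


# Doubling the ISO (one stop) halves the required exposure time.
print(stops_between(100, 200))            # one stop
print(equivalent_shutter(1 / 60, 100, 200))  # about 1/120 s
```

The same relation holds whether the ISO number comes from a film box or a digital camera menu, which is the point being made in the post.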

Oct 16, 2022 08:53:38 #

Jrhoffman75 wrote:

There is an ISO standard for setting ISO values in digital cameras:

ISO 19093:2018(en)

Photography — Digital cameras — Measuring low-light performance

Did you read it? I want to but it's too expensive for me to do so. It would cost me $120 or so.

Oct 16, 2022 09:02:48 #

Architect1776 wrote:

Regardless of what one wants to say about sensors, ISO in digital is still ISO.

It compares directly to "film" ISO, as I use the same settings whether it is film or digital for the same exposure.

So if at X ISO a setting is underexposed on film it is exactly the same underexposure in digital. I have made direct comparisons and it is the same, at least for me every time.

For old film cameras with no meter I use the digital camera as the meter.

Oct 16, 2022 09:04:08 #

BebuLamar wrote:

Did you read it? I want to but it's too expensive for me to do so. It would cost me $120 or so.

I didn’t. Bottom line is that it is not an arbitrary set of numbers. They are established to get proper exposure at set light intensity values, same as film. That is why the Sunny 16 rule works the same for film and digital.
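
For readers unfamiliar with Sunny 16, here is a minimal sketch of the rule of thumb: in bright sun at f/16, the shutter time is roughly 1/ISO seconds, and other apertures scale with the square of the f-number. The function name `sunny16_shutter` is hypothetical, and the rule itself is an approximation for full sun, not a metering standard.

```python
def sunny16_shutter(iso: float, f_number: float = 16.0) -> float:
    """Approximate shutter time in seconds for a subject in bright sun.

    At f/16 the time is 1/ISO; opening the aperture by one stop
    (dividing the f-number by about 1.4) halves the time.
    """
    return (f_number / 16.0) ** 2 / iso


print(sunny16_shutter(100))      # 0.01 s, i.e. 1/100 s at f/16
print(sunny16_shutter(400, 8))   # 1/1600 s at f/8
```

Nothing in the calculation depends on whether the ISO value describes film or a digital sensor.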

Oct 16, 2022 09:48:36 #

Ysarex

Loc: St. Louis

Rongnongno wrote:

A camera sensor array (SA), regardless of how recent it is, has a single sensitivity that never changes.

Correct.

Rongnongno wrote:

What changes is the electrical current used to transform the analog data (light) into digital.

If you're still talking about ISO, ISO is not defined by one of the methods used to implement it. Applying analog gain to the sensor output is one of multiple methods used to implement ISO but that method does not define ISO so it's incorrect to refer to ISO as, "the electrical current used to transform the analog data..."

Rongnongno wrote:

The more electricity, the more data is captured under lower light conditions. The cost is signal noise.

This is incorrect. How much data can be captured is primarily a function of exposure. (Changing ISO does not directly alter exposure which is a function of time (shutter speed) and lens aperture.) Signal processing after exposure can lose data but no amount of processing can add data.

There are numerous sources of noise in a digital photo, but most of the noise we see and talk about is shot noise. Read noise in our modern cameras has been effectively removed. If you have an older camera that still generates visible read noise, then raising ISO will suppress that noise. Shot noise is in the signal (light) itself, and its visibility changes with exposure: with a stronger signal (more exposure) the noise drops in proportion to the signal, and vice versa. Processing to apply an ISO adjustment neither adds nor removes shot noise. By lightening an image, ISO processing can reveal (make more visible) shot noise.
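
A rough sketch of the shot noise argument, assuming photon arrival follows Poisson statistics (so the noise is the square root of the mean photon count) and ignoring read noise entirely. The function `shot_noise_snr` and its `gain` parameter are illustrative names, with `gain` standing in for whatever ISO processing a camera applies after exposure.

```python
import math


def shot_noise_snr(mean_photons: float, gain: float = 1.0) -> float:
    """Signal-to-noise ratio of a shot-noise-limited signal.

    The gain multiplies signal and noise alike, so it cancels out:
    only the exposure (mean photon count) changes the ratio.
    """
    signal = gain * mean_photons
    noise = gain * math.sqrt(mean_photons)
    return signal / noise


print(shot_noise_snr(10_000))          # full exposure
print(shot_noise_snr(10_000, gain=8))  # same exposure, lightened 3 stops: same ratio
print(shot_noise_snr(2_500))           # 2 stops less exposure: ratio halves
```

Under these assumptions, lightening the image does not change the ratio; cutting the exposure does.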

Rongnongno wrote:

The real sensitivity of a SA lies with its ability to capture a range of luminosity. This is referred to as DR.

Sensitivity and DR are two different things. DR is another topic but it is related to ISO in that the two most common methods of implementing an ISO increase (analog gain applied to the sensor signal or digital scaling) will reduce the available DR.
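
As a simplified illustration of why an ISO increase costs DR, the sketch below assumes that each doubling of ISO halves the largest sensor signal that can be recorded before clipping, while the noise floor (in electrons) stays fixed. Real sensors deviate from this model, and the function name and the example values (60,000 electrons of capacity, a floor of 3 electrons, base ISO 100) are assumptions chosen only for the arithmetic.

```python
import math


def dynamic_range_stops(full_well_e: float, noise_floor_e: float,
                        iso: float, base_iso: float = 100.0) -> float:
    """Dynamic range in stops under a simple clipping model (an assumption)."""
    gain = iso / base_iso
    usable_ceiling_e = full_well_e / gain  # highlights clip earlier as gain rises
    return math.log2(usable_ceiling_e / noise_floor_e)


base = dynamic_range_stops(60_000, 3, iso=100)
raised = dynamic_range_stops(60_000, 3, iso=800)
print(round(base, 2), round(raised, 2))  # three stops of ISO cost three stops of DR here
```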

Rongnongno wrote:

If at first the DR was so limited that it made acceptance of digital cameras difficult, this is not the case anymore, as sensors are capable of capturing more than film ever could. There is a drawback…

Grain… Grain is the result of random clustering of chemicals on a film, creating an effect that was sometimes welcomed for artistic purposes. A digital camera cannot create grain; instead it creates predictable noise* that depends on, among other things, the voltage applied to the SA.

This sounds too much like ISO is creating noise. It doesn't do that. If the system contains read noise, ISO processing will suppress it. Shot noise is in the signal and is not created or added by the action of ISO processing.

In a digital camera ISO establishes a method for determining the lightness of the standard output image relative to a measured exposure of the sensor. Implementation of that determined lightness in the output image is not specified and left to the camera manufacturer -- multiple implementation methods are in use.

Rongnongno wrote:

So why is the term ISO still used? Simply because... (show quote)

Oct 16, 2022 10:02:26 #

Jrhoffman75 wrote:

I didn’t. Bottom line is that it is not an arbitrary set of numbers. They are established to get proper exposure at set light intensity values, same as film. That is why the Sunny 16 rule works the same for film and digital.

I didn't read it either, but I heard the standard is very broad and allows manufacturers a lot of leeway, so that one manufacturer's ISO doesn't have to be the same as another's. I heard that there are 5 different ways that camera manufacturers can define their ISO.